When Nvidia revealed its superfast RTX 3000 series, it outclassed every GPU then on the market. These are the fastest and most powerful graphics cards available so far. Surprisingly, a $500 GPU from the RTX 3000 series competes directly with a $1,200 GPU from the previous generation.

Here we are talking about the RTX 3070, which replaces the RTX 2070 from the RTX 20 series. Although the performance gap is significant, comparing the two cards shows exactly what improvements Nvidia made in the RTX 3070.

Architecture

Perhaps the most significant change is the new architecture, which plays the biggest role in determining a computer component's performance. The first ray-tracing-capable graphics cards arrived in 2018, bringing the previously little-known technique of rendering lights and shadows dynamically in real time.

The RTX 20 series used the Turing architecture, which made it almost 20% faster than the older GTX 10 series at the same price while adding ray-tracing effects. However, the 20 series struggled to deliver good performance with ray tracing enabled. The cards were beasts in non-ray-traced games, which are still the most common, but they fell short at the very feature they were meant to excel at.

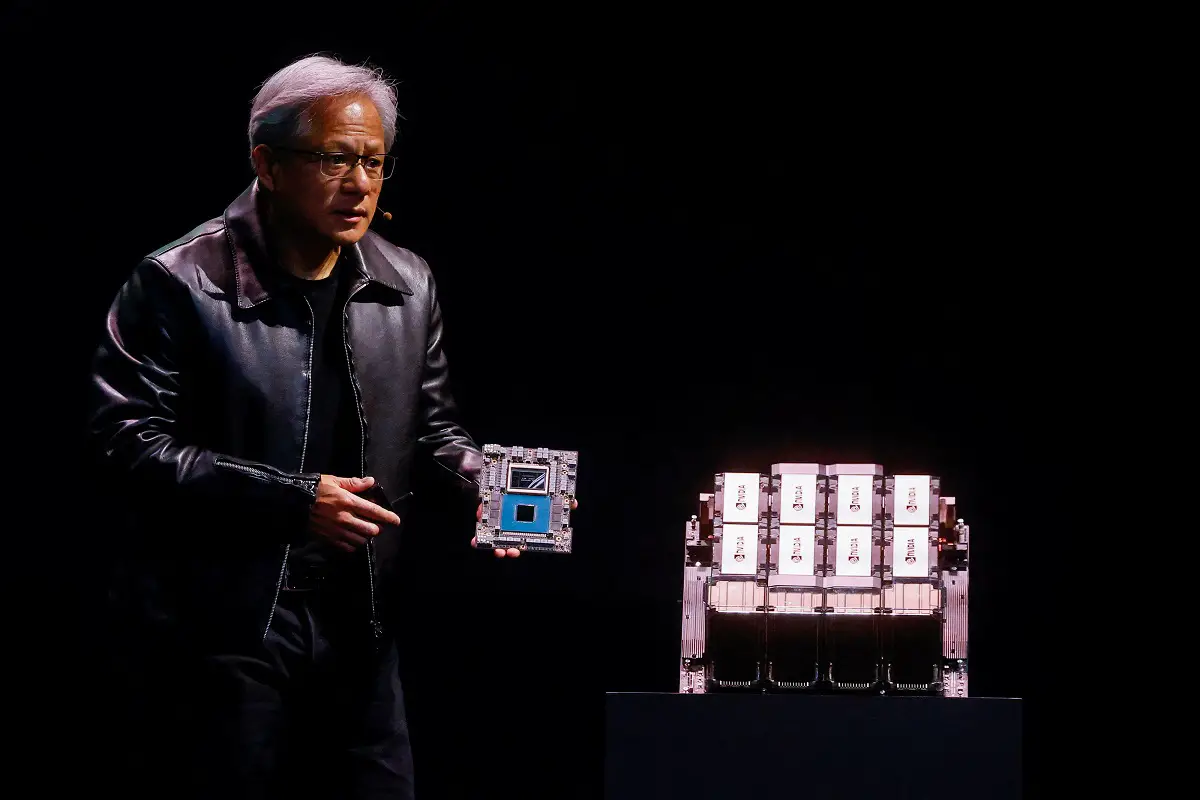

How the RTX 3070 gets faster with the Ampere architecture

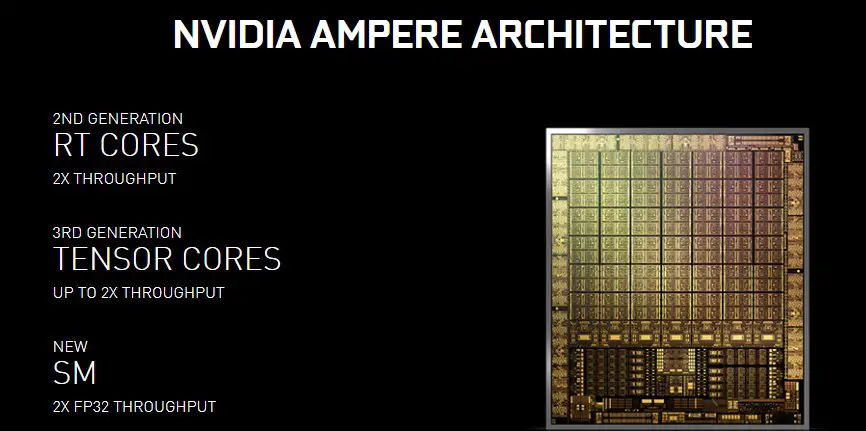

The RTX 3070 uses the Ampere architecture, which provides 2nd-gen RT Cores and 3rd-gen Tensor Cores. These help raise performance and frame rates without sacrificing image quality.

Although the RTX 3070 has fewer Tensor Cores than the RTX 2070 (184 vs 288), each third-generation Tensor Core does more work per clock, which accelerates AI workloads such as Deep Learning Super Sampling (DLSS), AI Slow-Mo and AI Super Rez, as well as matrix operations.
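
At their core, Tensor Cores accelerate a fused matrix multiply-accumulate, D = A × B + C, on small matrix tiles, typically taking low-precision inputs such as FP16 and accumulating in FP32. The sketch below emulates that arithmetic on the CPU with a tiny 2×2 example. The helper names (`to_fp16`, `to_fp32`, `mma`) and the tile size are hypothetical and chosen for illustration; real Tensor Cores do this in hardware on larger tiles and may order or round intermediate sums differently.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def mma(a, b, c):
    """Hypothetical helper: emulate D = A x B + C with FP16 inputs and FP32 accumulation."""
    n, k, m = len(a), len(b), len(b[0])
    d = []
    for i in range(n):
        row = []
        for j in range(m):
            acc = to_fp32(c[i][j])  # accumulator starts from C in FP32
            for p in range(k):
                # Inputs are rounded to FP16; the product and running sum stay in FP32.
                prod = to_fp32(to_fp16(a[i][p]) * to_fp16(b[p][j]))
                acc = to_fp32(acc + prod)
            row.append(acc)
        d.append(row)
    return d


if __name__ == "__main__":
    a = [[1.0, 2.0], [3.0, 4.0]]
    b = [[5.0, 6.0], [7.0, 8.0]]
    c = [[0.5, 0.5], [0.5, 0.5]]
    print(mma(a, b, c))  # [[19.5, 22.5], [43.5, 50.5]]
```

Keeping the inputs in FP16 halves the data that has to be moved, while accumulating in FP32 limits the rounding error that builds up across long sums.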

The Turing architecture in the RTX 2070 is built on a 12nm process, while the RTX 3070's Ampere chip (GA104) uses Samsung's 8nm process; Nvidia's 7nm Ampere parts are its data-center A100 GPUs. Ampere also introduced new Tensor Core precisions: TensorFloat-32 (TF32) and, on the A100, FP64 Tensor Core support. TF32 works on the same data as FP32, which RTX 20 series cards already support, and according to Nvidia it speeds up AI training by up to 20 times without any code changes (a figure Nvidia quotes for the A100 against the previous-generation V100).
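
TF32 keeps FP32's 8-bit exponent, so it covers the same numeric range, but keeps only 10 mantissa bits, the same precision as FP16. A minimal sketch of what that means for a single value is to take an FP32 bit pattern and round away its 13 lowest mantissa bits. The round-to-nearest-even mode and the helper names (`fp32_bits`, `bits_fp32`, `round_to_tf32`) are assumptions for illustration, not a description of exactly how the hardware rounds.

```python
import struct


def fp32_bits(x: float) -> int:
    """Return the 32-bit IEEE 754 pattern of x rounded to FP32."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_fp32(b: int) -> float:
    """Interpret a 32-bit pattern as an FP32 value."""
    return struct.unpack("<f", struct.pack("<I", b))[0]


def round_to_tf32(x: float) -> float:
    """Hypothetical helper: round x to TF32 precision (8-bit exponent, 10-bit mantissa).

    The result is stored back in an FP32 container. Rounds to nearest, ties to even,
    on the 13 dropped mantissa bits. NaN/Inf handling is omitted for brevity.
    """
    dropped = 13                                   # FP32 has 23 mantissa bits, TF32 keeps 10
    b = fp32_bits(x)
    lsb = (b >> dropped) & 1                       # lowest mantissa bit that survives
    bias = (1 << (dropped - 1)) - 1 + lsb          # round-half-to-even bias
    b = (b + bias) & ~((1 << dropped) - 1) & 0xFFFFFFFF
    return bits_fp32(b)


if __name__ == "__main__":
    print(round_to_tf32(0.1))     # 0.0999755859375, same as FP16 precision
    print(round_to_tf32(3.0e38))  # still finite: FP32's exponent range is kept
```

The second call is the point of the format: an FP16 value would overflow at that magnitude, while TF32 trades only precision, not range.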

Specs

Nvidia has rarely, if ever, made such a big jump in specs from one generation to the next. The RTX 3070 comfortably outclasses the RTX 2070, with some specs, such as the CUDA core count, more than doubled, which lets it compete with the RTX 2080 Ti.

As speculated earlier, the RTX 3070's memory runs at 14 Gbps rather than 16 Gbps, unfortunately the same speed as the RTX 2070's. This is confirmed by the product pages for the Zotac Gaming GeForce RTX 3070 Twin Edge and the Asus Dual RTX 3070 8G. Among the specs that did change, the CUDA core count is more than double that of the RTX 2070, and the RT Core count rises about 1.28 times (from 36 to 46). The Tensor Core count actually falls (from 288 to 184), but each third-generation Tensor Core does more work per clock, so DLSS and other AI acceleration should still improve.
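
These spec ratios are simple arithmetic on published core counts. The quick sketch below uses reference-spec figures that are assumptions here (5,888 vs 2,304 CUDA cores, 46 vs 36 RT Cores, 184 vs 288 Tensor Cores, and a 256-bit memory bus on both cards). It shows where the ratios come from and why identical 14 Gbps memory on the same bus width gives both cards the same peak bandwidth.

```python
# Reference-spec figures (assumed for this sketch; check against Nvidia's spec pages).
RTX_2070 = {"cuda_cores": 2304, "rt_cores": 36, "tensor_cores": 288,
            "mem_gbps": 14, "bus_bits": 256}
RTX_3070 = {"cuda_cores": 5888, "rt_cores": 46, "tensor_cores": 184,
            "mem_gbps": 14, "bus_bits": 256}


def ratio(key: str) -> float:
    """How many times larger the RTX 3070's figure is than the RTX 2070's."""
    return RTX_3070[key] / RTX_2070[key]


def bandwidth_gb_s(card: dict) -> float:
    """Peak memory bandwidth in GB/s: per-pin rate (Gbps) x bus width (bits) / 8 bits per byte."""
    return card["mem_gbps"] * card["bus_bits"] / 8


if __name__ == "__main__":
    for key in ("cuda_cores", "rt_cores", "tensor_cores"):
        print(f"{key}: {ratio(key):.2f}x")  # 2.56x, 1.28x, 0.64x
    print(bandwidth_gb_s(RTX_2070), bandwidth_gb_s(RTX_3070))  # 448.0 448.0
```

A raw count ratio below 1 (as for Tensor Cores) does not by itself mean slower AI performance, because per-core throughput changed between generations.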

Cooler Design

Although AIB (add-in board partner) models are available for both the RTX 2070 and 3070, the Founders Editions give the fairest comparison of each cooler's efficiency. The RTX 2070 Founders Edition uses a medium-sized dual-slot heatsink with two 13-blade axial fans on the same side.

The RTX 3070 Founders Edition takes a completely different approach to heat dissipation. It also has two fans, but heat is drawn out of the aluminium heatsinks toward opposite sides of the card. The fans act as both intake and exhaust and, unlike a traditional cooler such as the RTX 2070's, the design does not let hot air escape from every side.

Performance

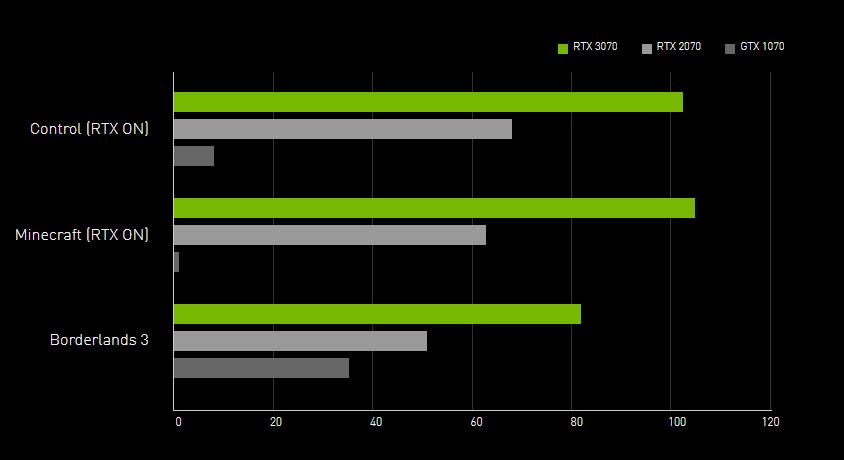

Performance is what makes the RTX 3070 a compelling choice over the 2070. Specs are only theoretical, but they can be used to estimate real-world performance. According to Nvidia, the RTX 3070 is roughly 60% faster than the RTX 2070 and even beats the RTX 2080 Ti, a $1,200 card from the Turing generation.
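
One common way to turn specs into a theoretical number is peak FP32 throughput: if every CUDA core completes one fused multiply-add (two floating-point operations) per clock, peak FLOPS = 2 × CUDA cores × boost clock. The sketch below assumes reference boost clocks of 1,620 MHz for the RTX 2070 and 1,725 MHz for the RTX 3070, along with the cards' reference CUDA core counts; none of these figures appear in the text above, and `peak_fp32_tflops` is a hypothetical helper.

```python
def peak_fp32_tflops(cuda_cores: int, boost_clock_mhz: float) -> float:
    """Hypothetical helper: theoretical peak FP32 throughput in TFLOPS.

    Assumes every CUDA core completes one fused multiply-add (2 FLOPs) per clock.
    """
    flops = 2 * cuda_cores * boost_clock_mhz * 1e6
    return flops / 1e12


# Core counts and boost clocks are assumed reference values, not figures from the article.
rtx_2070 = peak_fp32_tflops(2304, 1620)
rtx_3070 = peak_fp32_tflops(5888, 1725)

if __name__ == "__main__":
    print(f"RTX 2070: {rtx_2070:.2f} TFLOPS")    # RTX 2070: 7.46 TFLOPS
    print(f"RTX 3070: {rtx_3070:.2f} TFLOPS")    # RTX 3070: 20.31 TFLOPS
    print(f"Ratio: {rtx_3070 / rtx_2070:.2f}x")  # Ratio: 2.72x
```

The theoretical ratio is far larger than the roughly 60% gain Nvidia claims, which is why peak figures should not be read as frame-rate predictions. Part of the gap is architectural: on Ampere, half of the FP32 units share a datapath with INT32 work, so games rarely reach that peak.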

Nvidia has published official benchmarks, and the graph on its RTX 3070 page shows how much faster the card is than the 2070 and the 1070. These first-party numbers can't be trusted 100%, but they are likely to be broadly accurate, and once reviewers get their samples we will be able to verify Nvidia's claims.
